- 14.1. Find The Best

SolverConfiguration - 14.2. Benchmark Configuration

- 14.2.1. Add Dependency On

optaplanner-benchmark - 14.2.2. Build And Run A

PlannerBenchmark - 14.2.3. SolutionFileIO: Input And Output Of Solution Files

- 14.2.4. Warming Up The HotSpot Compiler

- 14.2.5. Benchmark Blueprint: A Predefined Configuration

- 14.2.6. Write The Output Solution Of Benchmark Runs

- 14.2.7. Benchmark Logging

- 14.2.1. Add Dependency On

- 14.3. Benchmark Report

- 14.4. Summary Statistics

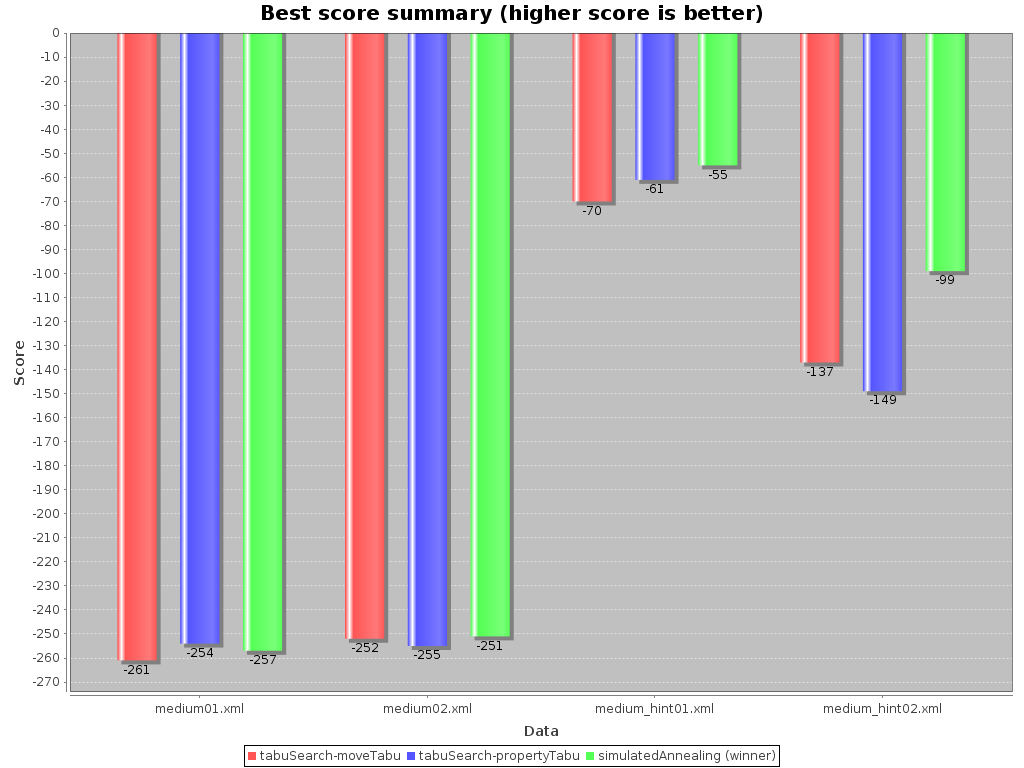

- 14.4.1. Best Score Summary (Graph And Table)

- 14.4.2. Best Score Scalability Summary (Graph)

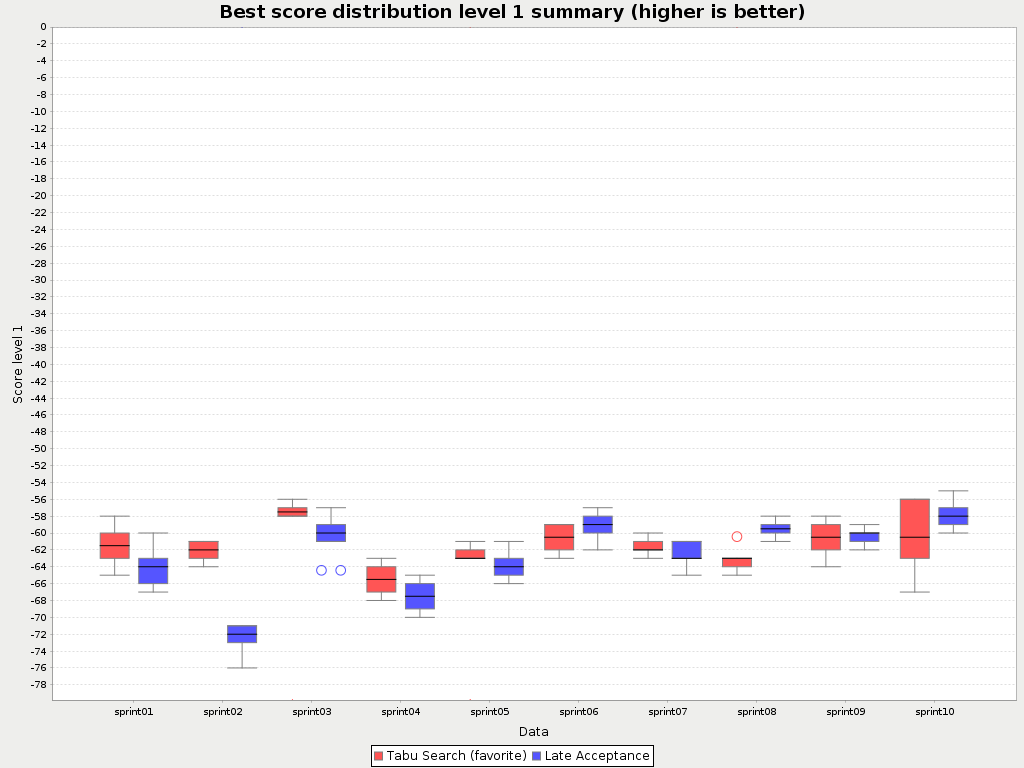

- 14.4.3. Best Score Distribution Summary (Graph)

- 14.4.4. Winning Score Difference Summary (Graph And Table)

- 14.4.5. Worst Score Difference Percentage (ROI) Summary (Graph and Table)

- 14.4.6. Average Calculation Count Summary (Graph and Table)

- 14.4.7. Time Spent Summary (Graph And Table)

- 14.4.8. Time Spent Scalability Summary (Graph)

- 14.4.9. Best Score Per Time Spent Summary (Graph)

- 14.5. Statistic Per Dataset (Graph And CSV)

- 14.5.1. Enable A Problem Statistic

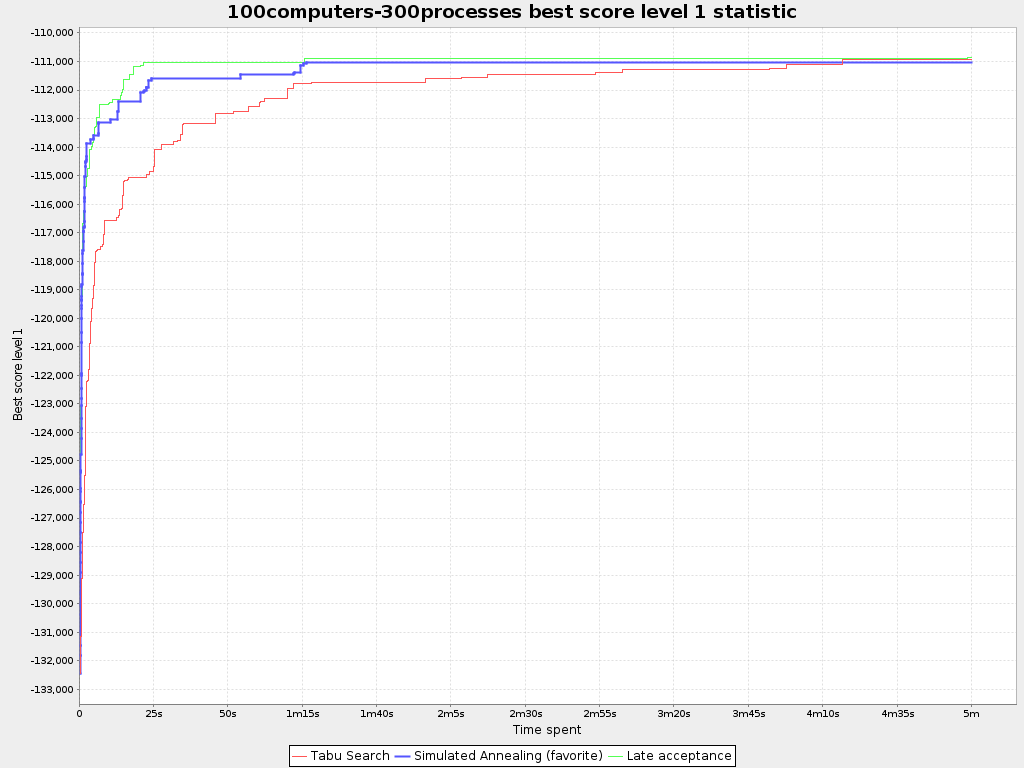

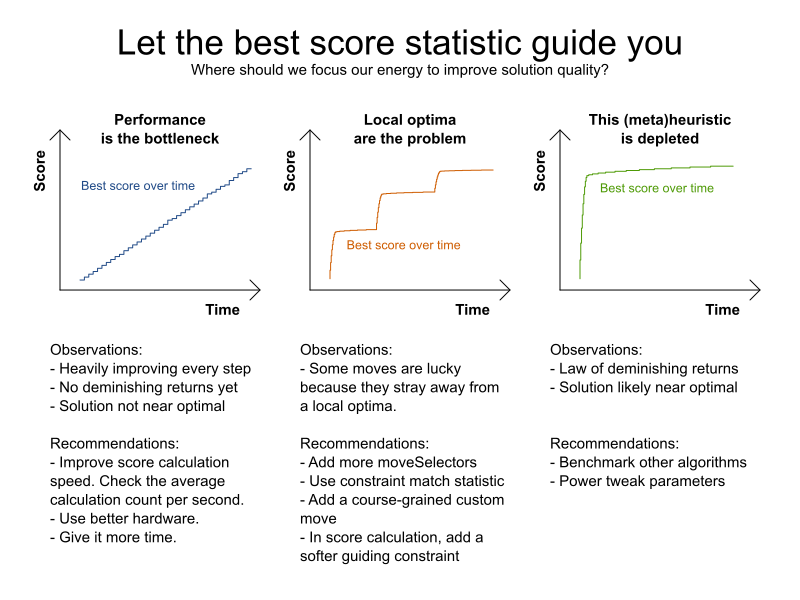

- 14.5.2. Best Score Over Time Statistic (Graph And CSV)

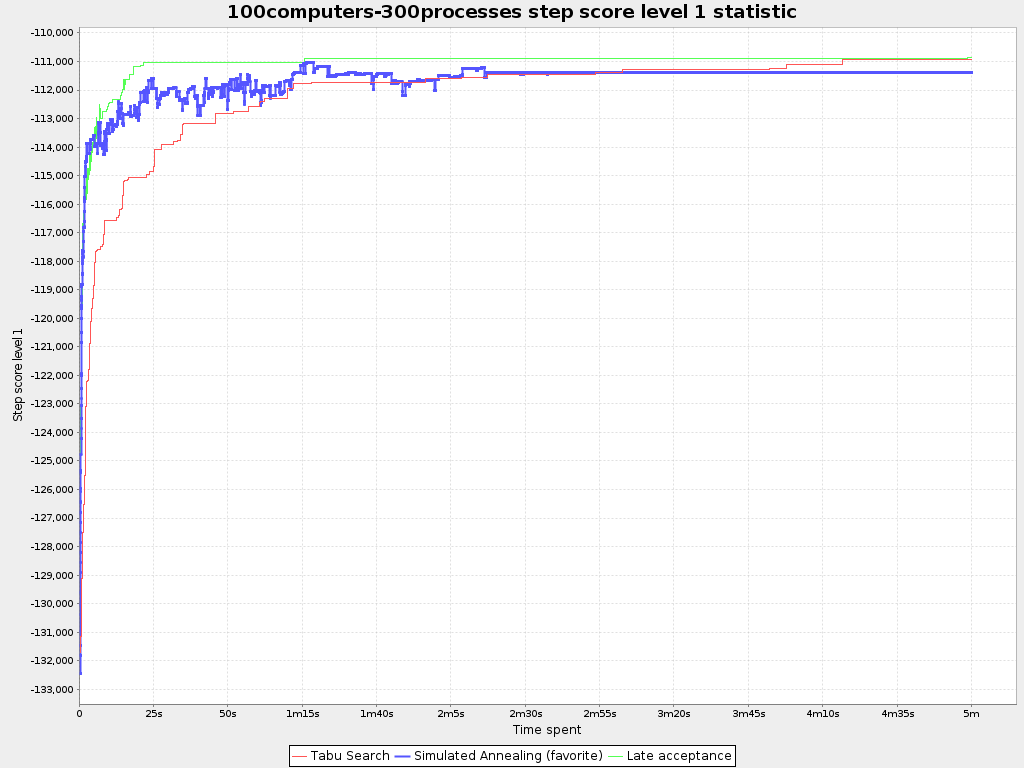

- 14.5.3. Step Score Over Time Statistic (Graph And CSV)

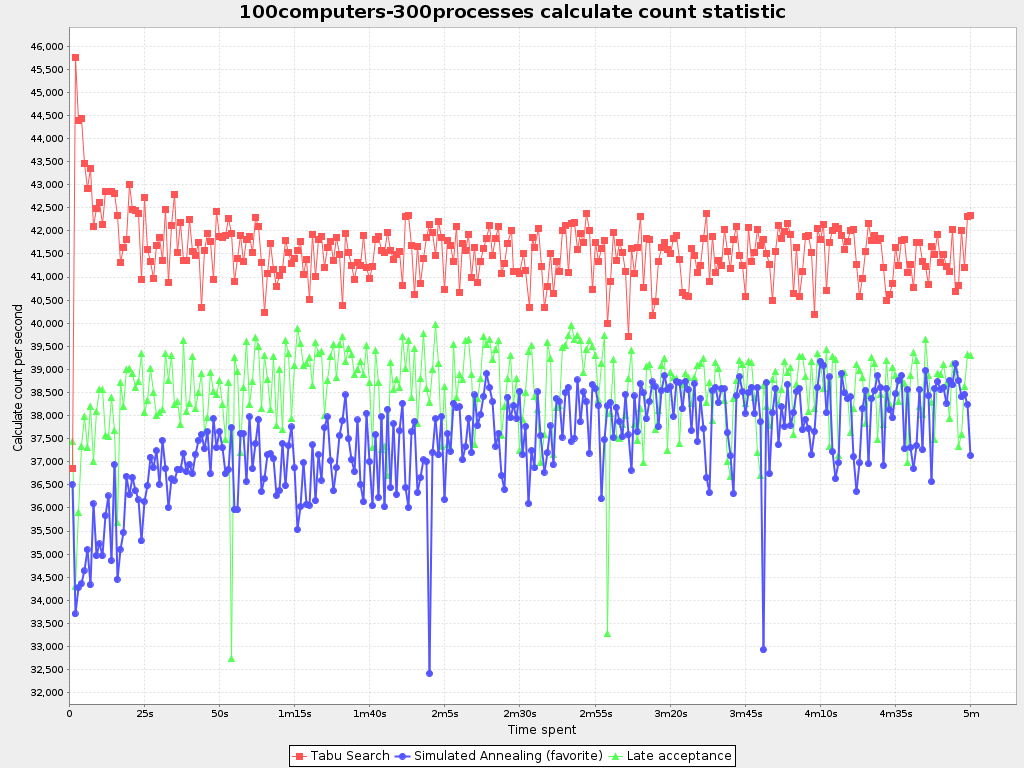

- 14.5.4. Calculate Count Per Second Statistic (Graph And CSV)

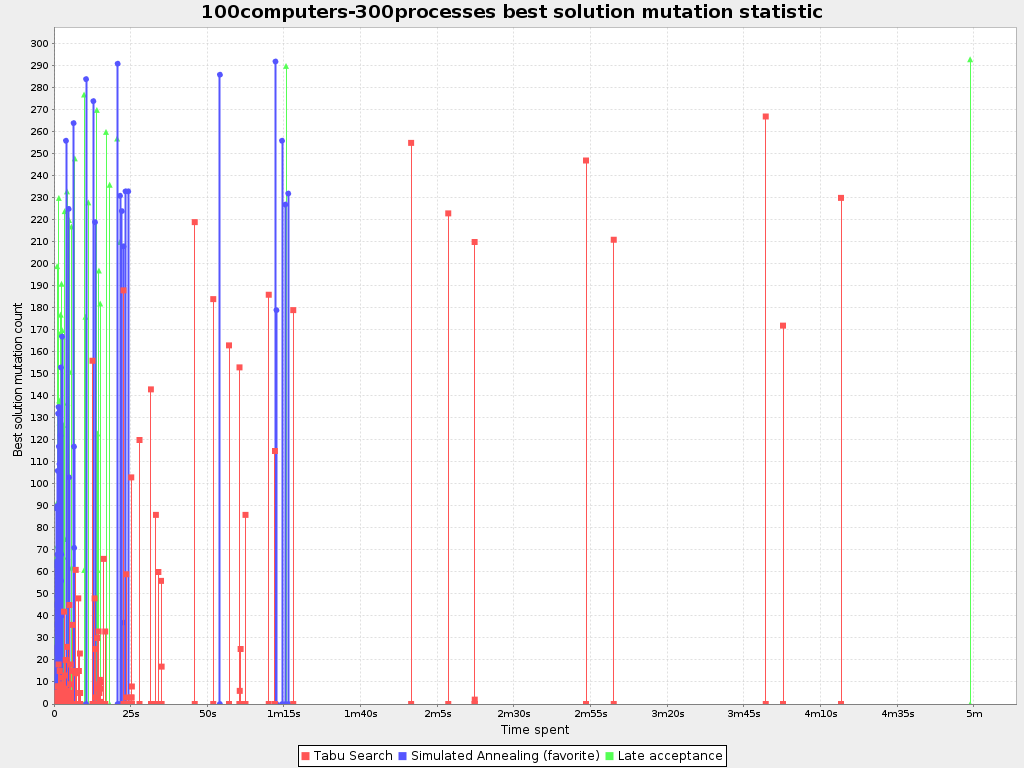

- 14.5.5. Best Solution Mutation Over Time Statistic (Graph And CSV)

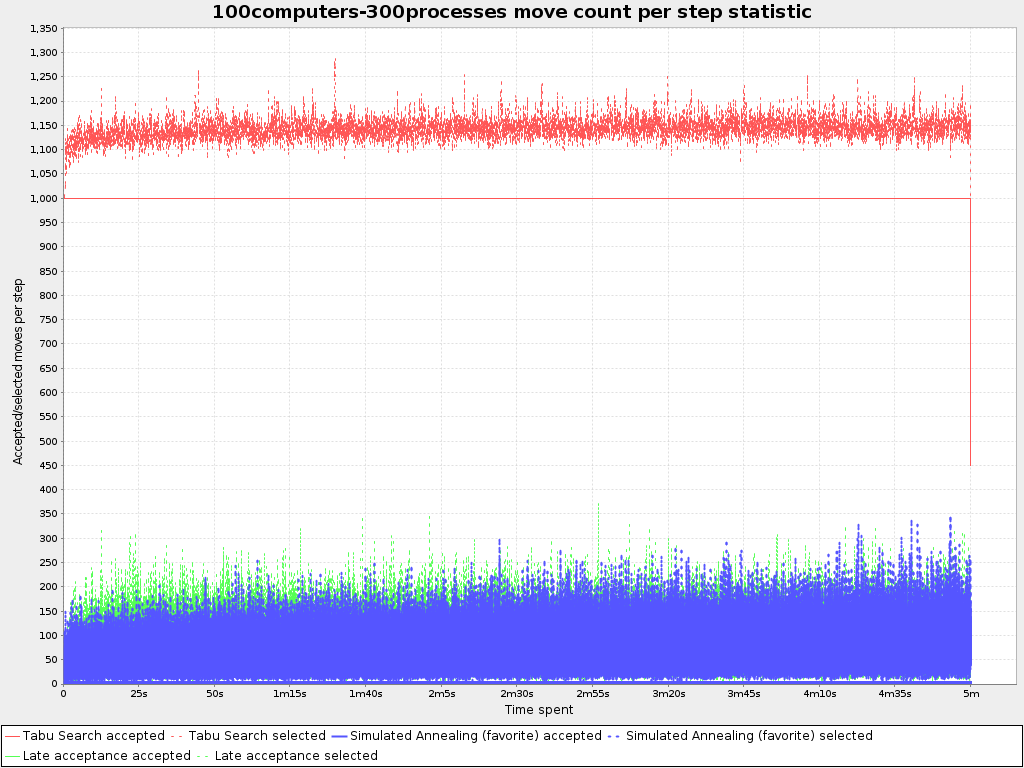

- 14.5.6. Move Count Per Step Statistic (Graph And CSV)

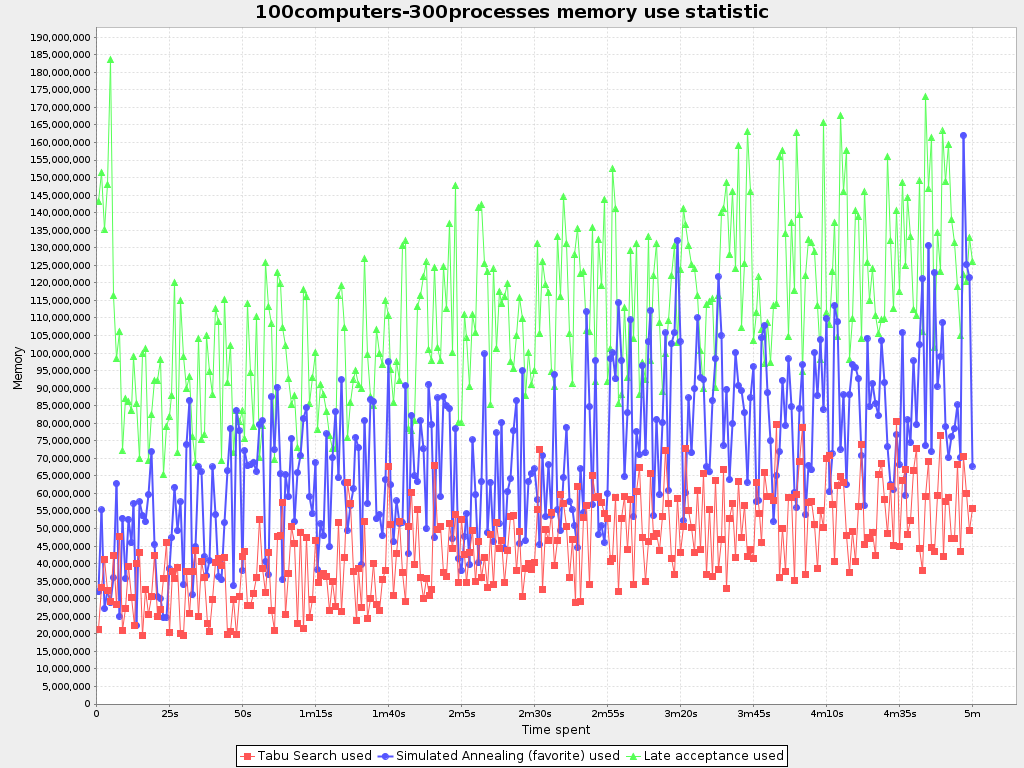

- 14.5.7. Memory Use Statistic (Graph And CSV)

- 14.6. Statistic Per Single Benchmark (Graph And CSV)

- 14.6.1. Enable A Single Statistic

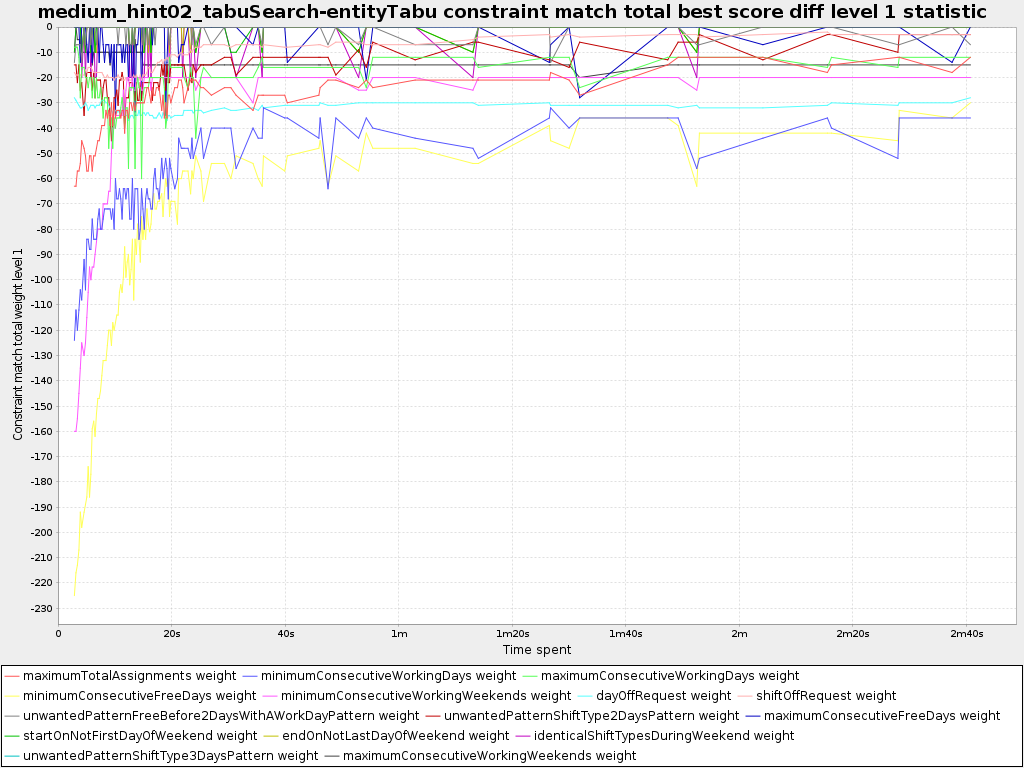

- 14.6.2. Constraint Match Total Best Score Over Time Statistic (Graph And CSV)

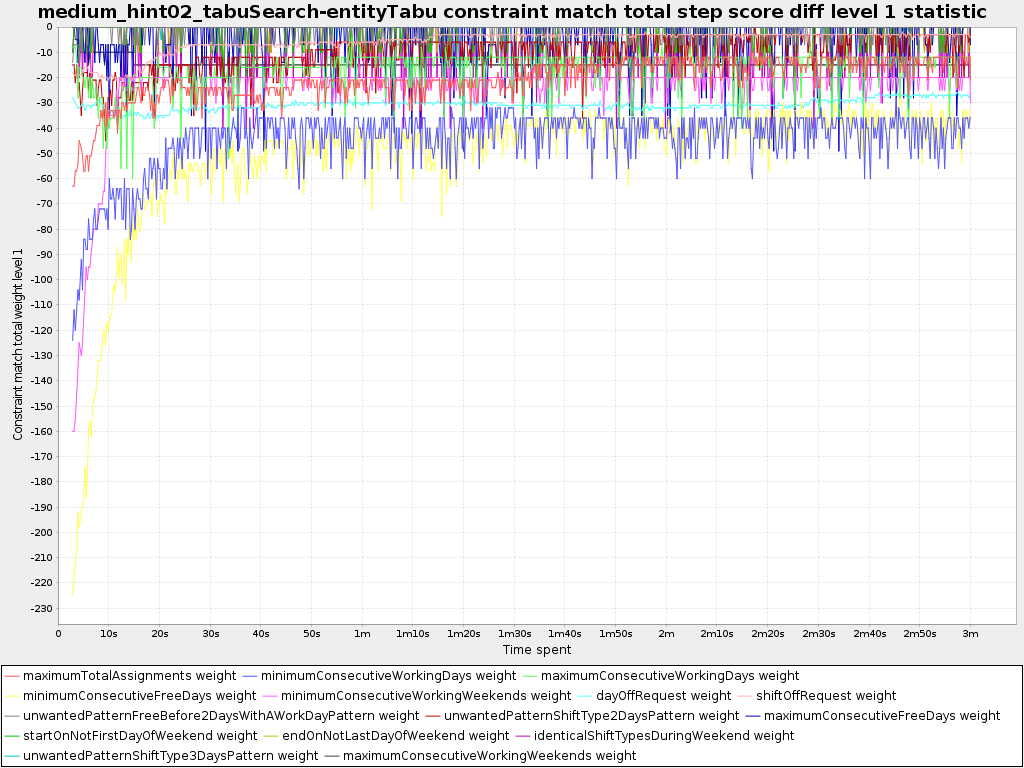

- 14.6.3. Constraint Match Total Step Score Over Time Statistic (Graph And CSV)

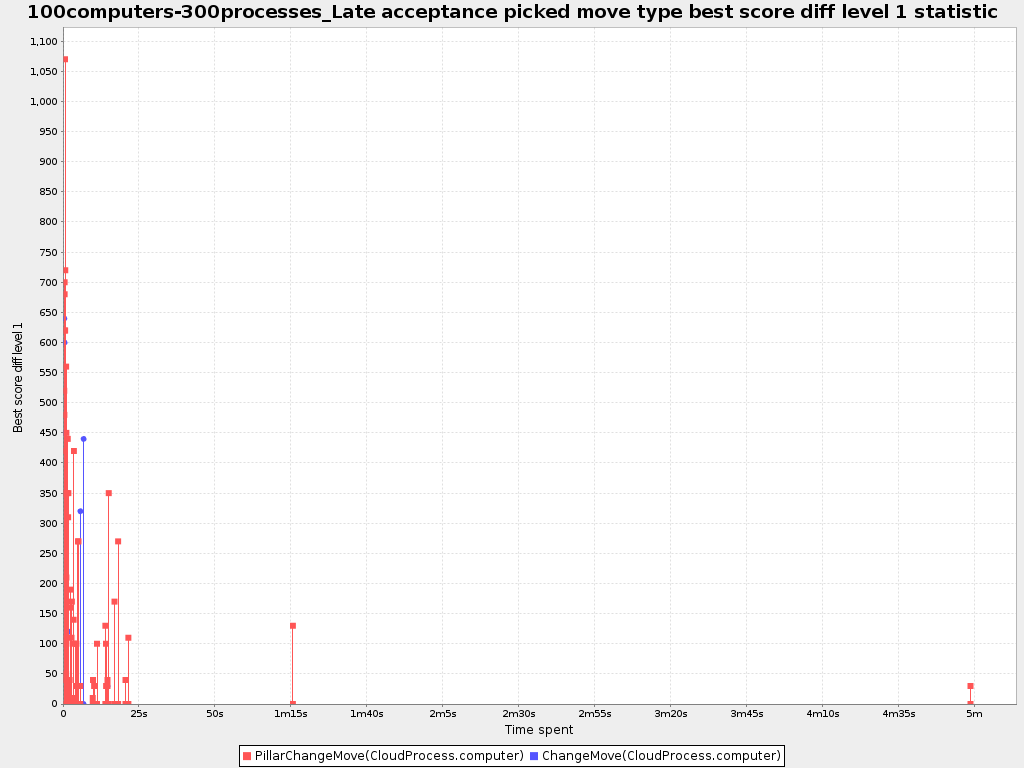

- 14.6.4. Picked Move Type Best Score Diff Over Time Statistic (Graph And CSV)

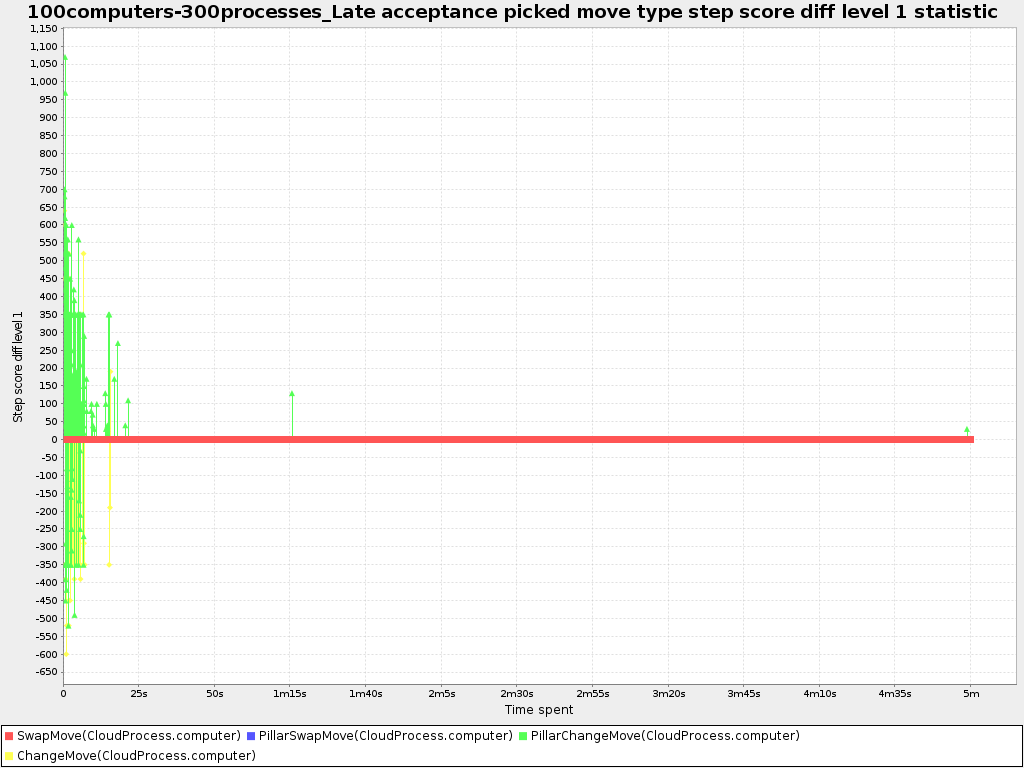

- 14.6.5. Picked Move Type Step Score Diff Over Time Statistic (Graph And CSV)

- 14.7. Advanced Benchmarking

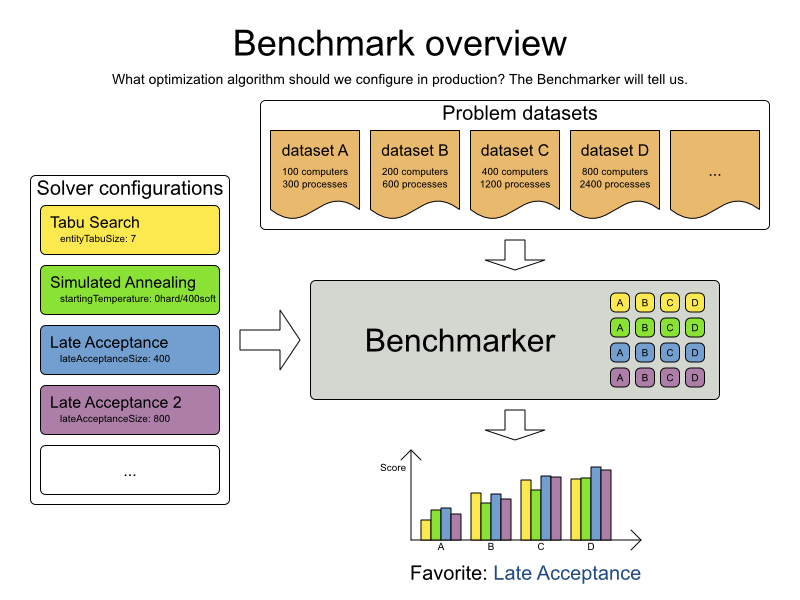

Planner supports several optimization algorithms, so you're probably wondering which is the best one? Although some optimization algorithms generally perform better than others, it really depends on your problem domain. Most solver phases have parameters which can be tweaked. Those parameters can influence the results a lot, even though most solver phases work pretty well out-of-the-box.

Luckily, Planner includes a benchmarker, which allows you to play out different solver phases with different settings against each other in development, so you can use the best configuration for your planning problem in production.

The benchmarker is in a separate artifact called optaplanner-benchmark.

If you use Maven, add a dependency in your pom.xml file:

<dependency>

<groupId>org.optaplanner</groupId>

<artifactId>optaplanner-benchmark</artifactId>

</dependency>This is similar for Gradle, Ivy and Buildr. The version must be exactly the same as the

optaplanner-core version used (which is automatically the case if you import

optaplanner-bom).

If you use ANT, you've probably already copied the required jars from the download zip's

binaries directory.

Build a PlannerBenchmark instance with a PlannerBenchmarkFactory.

Configure it with a benchmark configuration XML file, provided as a classpath resource:

PlannerBenchmarkFactory plannerBenchmarkFactory = PlannerBenchmarkFactory.createFromXmlResource(

"org/optaplanner/examples/nqueens/benchmark/nqueensBenchmarkConfig.xml");

PlannerBenchmark plannerBenchmark = plannerBenchmarkFactory.buildPlannerBenchmark();

plannerBenchmark.benchmark();A benchmark configuration file looks like this:

<?xml version="1.0" encoding="UTF-8"?>

<plannerBenchmark>

<benchmarkDirectory>local/data/nqueens</benchmarkDirectory>

<inheritedSolverBenchmark>

<problemBenchmarks>

...

<inputSolutionFile>data/nqueens/unsolved/32queens.xml</inputSolutionFile>

<inputSolutionFile>data/nqueens/unsolved/64queens.xml</inputSolutionFile>

</problemBenchmarks>

<solver>

...<!-- Common solver configuration -->

</solver>

</inheritedSolverBenchmark>

<solverBenchmark>

<name>Tabu Search</name>

<solver>

...<!-- Tabu Search specific solver configuration -->

</solver>

</solverBenchmark>

<solverBenchmark>

<name>Simulated Annealing</name>

<solver>

...<!-- Simulated Annealing specific solver configuration -->

</solver>

</solverBenchmark>

<solverBenchmark>

<name>Late Acceptance</name>

<solver>

...<!-- Late Acceptance specific solver configuration -->

</solver>

</solverBenchmark>

</plannerBenchmark>This PlannerBenchmark will try 3 configurations (Tabu Search, Simulated Annealing and

Late Acceptance) on 2 data sets (32queens and 64queens), so it will run 6 solvers.

Every <solverBenchmark> element contains a solver configuration and one or more

<inputSolutionFile> elements. It will run the solver configuration on each of those

unsolved solution files. The element name is optional, because it is generated if absent. The

inputSolutionFile is read by a SolutionFileIO (relative

to the working directory).

Note

Use a forward slash (/) as the file separator (for example in the element

<inputSolutionFile>). That will work on any platform (including Windows).

Do not use backslash (\) as the file separator: that breaks portability because it does

not work on Linux and Mac.

The benchmark report will be written in the directory specified the

<benchmarkDirectory> element (relative to the working directory).

Note

It's recommended that the benchmarkDirectory is a directory ignored for source control

and not cleaned by your build system. This way the generated files are not bloating your source control and they

aren't lost when doing a build. Usually that directory is called local.

If an Exception or Error occurs in a single benchmark, the entire

Benchmarker will not fail-fast (unlike everything else in Planner). Instead, the Benchmarker will continue to run

all other benchmarks, write the benchmark report and then fail (if there is at least 1 failing single benchmark).

The failing benchmarks will be clearly marked as such in the benchmark report.

To lower verbosity, the common parts of multiple <solverBenchmark> elements are

extracted to the <inheritedSolverBenchmark> element. Every property can still be

overwritten per <solverBenchmark> element. Note that inherited solver phases such as

<constructionHeuristic> or <localSearch> are not overwritten

but instead are added to the tail of the solver phases list.

The benchmarker needs to be able to read the input files to load a Solution. Also, it

might need to write the best Solution of each benchmark to an output file. For that it uses a

class that implements the SolutionFileIO interface:

public interface SolutionFileIO {

String getInputFileExtension();

String getOutputFileExtension();

Solution read(File inputSolutionFile);

void write(Solution solution, File outputSolutionFile);

}The SolutionFileIO interface is in the

optaplanner-persistence-common jar (which is a dependency of the

optaplanner-benchmark jar).

By default, a benchmarker uses a XStreamSolutionFileIO instance to read and write

solutions.

It's required to tell the benchmarker about your Solution class which is annotated with

XStream annotations:

<problemBenchmarks>

<xStreamAnnotatedClass>org.optaplanner.examples.nqueens.domain.NQueens</xStreamAnnotatedClass>

<inputSolutionFile>data/nqueens/unsolved/32queens.xml</inputSolutionFile>

...

</problemBenchmarks>Those input files need to have been written with a XStreamSolutionFileIO instance, not

just any XStream instance, because the XStreamSolutionFileIO uses a

customized XStream instance.

Warning

XStream (and XML in general) is a very verbose format. Reading or writing very large datasets in this

format can cause an OutOfMemoryError and performance degradation.

Alternatively, implement your own SolutionFileIO implementation and configure it with

the solutionFileIOClass element:

<problemBenchmarks>

<solutionFileIOClass>org.optaplanner.examples.machinereassignment.persistence.MachineReassignmentFileIO</solutionFileIOClass>

<inputSolutionFile>data/machinereassignment/import/model_a1_1.txt</inputSolutionFile>

...

</problemBenchmarks>It's recommended that output files can be read as input files, which also implies that

getInputFileExtension() and getOutputFileExtension() return the same

value.

Warning

A SolutionFileIO implementation must be thread-safe.

The benchmark configuration currently expects an <inputSolutionFile> element for

each dataset. There are 2 ways to deal with this if your dataset is in a database or another type of

repository:

Extract the datasets from the database and serialize them to a local file (for example as XML with

XStreamSolutionFileIO). Then use those files an<inputSolutionFile>elements.For each dataset, create a txt file that holds the unique id of the dataset. Write a custom

SolutionFileIOthat reads that identifier, connects to the database and extract the problem identified by that id. Configure those txt files as<inputSolutionFile>elements.

Note

Local files are always faster and don't require a network connection.

Without a warm up, the results of the first (or first few) benchmarks are not reliable, because they will have lost CPU time on HotSpot JIT compilation (and possibly DRL compilation too).

To avoid that distortion, the benchmarker can run some of the benchmarks for a specified amount of time, before running the real benchmarks. Generally, a warm up of 30 seconds suffices:

<plannerBenchmark>

...

<warmUpSecondsSpentLimit>30</warmUpSecondsSpentLimit>

...

</plannerBenchmark>Note

The warm up time budget does not include the time it takes to load the datasets. With large datasets, this can cause the warm up to run considerably longer than specified in the configuration.

To quickly configure and run a benchmark for typical solver configs, use a

solverBenchmarkBluePrint instead of solverBenchmarks:

<?xml version="1.0" encoding="UTF-8"?>

<plannerBenchmark>

<benchmarkDirectory>local/data/nqueens</benchmarkDirectory>

<warmUpSecondsSpentLimit>30</warmUpSecondsSpentLimit>

<inheritedSolverBenchmark>

<problemBenchmarks>

<xStreamAnnotatedClass>org.optaplanner.examples.nqueens.domain.NQueens</xStreamAnnotatedClass>

<inputSolutionFile>data/nqueens/unsolved/32queens.xml</inputSolutionFile>

<inputSolutionFile>data/nqueens/unsolved/64queens.xml</inputSolutionFile>

<problemStatisticType>BEST_SCORE</problemStatisticType>

</problemBenchmarks>

<solver>

<scanAnnotatedClasses/>

<scoreDirectorFactory>

<scoreDefinitionType>SIMPLE</scoreDefinitionType>

<scoreDrl>org/optaplanner/examples/nqueens/solver/nQueensScoreRules.drl</scoreDrl>

<initializingScoreTrend>ONLY_DOWN</initializingScoreTrend>

</scoreDirectorFactory>

<termination>

<minutesSpentLimit>1</minutesSpentLimit>

</termination>

</solver>

</inheritedSolverBenchmark>

<solverBenchmarkBluePrint>

<solverBenchmarkBluePrintType>EVERY_CONSTRUCTION_HEURISTIC_TYPE_WITH_EVERY_LOCAL_SEARCH_TYPE</solverBenchmarkBluePrintType>

</solverBenchmarkBluePrint>

</plannerBenchmark>The following SolverBenchmarkBluePrintTypes are supported:

EVERY_CONSTRUCTION_HEURISTIC_TYPE: Run every Construction Heuristic type (First Fit, First Fit Decreasing, Cheapest Insertion, ...).

EVERY_LOCAL_SEARCH_TYPE: Run every Local Search type (Tabu Search, Late Acceptance, ...) with the default Construction Heuristic.

EVERY_CONSTRUCTION_HEURISTIC_TYPE_WITH_EVERY_LOCAL_SEARCH_TYPE: Run every Construction Heuristic type with every Local Search type.

The best solution of each benchmark run can be written in the benchmarkDirectory. By

default, this is disabled, because the files are rarely used and considered bloat. Also, on large datasets,

writing the best solution of each single benchmark can take quite some time and memory (causing an

OutOfMemoryError), especially in a verbose format like XStream XML.

To write those solutions in the benchmarkDirectory, enable

writeOutputSolutionEnabled:

<problemBenchmarks>

...

<writeOutputSolutionEnabled>true</writeOutputSolutionEnabled>

...

</problemBenchmarks>Benchmark logging is configured like the Solver

logging.

To separate the log messages of each single benchmark run into a separate file, use the MDC with key singleBenchmark.name in

a sifting appender. For example with Logback in logback.xml:

<appender name="fileAppender" class="ch.qos.logback.classic.sift.SiftingAppender">

<discriminator>

<key>singleBenchmark.name</key>

<defaultValue>app</defaultValue>

</discriminator>

<sift>

<appender name="fileAppender.${singleBenchmark.name}" class="...FileAppender">

<file>local/log/optaplannerBenchmark-${singleBenchmark.name}.log</file>

...

</appender>

</sift>

</appender>After running a benchmark, an HTML report will be written in the benchmarkDirectory with

the index.html filename. Open it in your browser. It has a nice overview of your benchmark

including:

Summary statistics: graphs and tables

Problem statistics per

inputSolutionFile: graphs and CSVEach solver configuration (ranked): Handy to copy and paste

Benchmark information: settings, hardware, ...

Note

Graphs are generated by the excellent JFreeChart library.

The HTML report will use your default locale to format numbers. If you share the benchmark report with

people from another country, consider overwriting the locale accordingly:

<plannerBenchmark>

...

<benchmarkReport>

<locale>en_US</locale>

</benchmarkReport>

...

</plannerBenchmark>The benchmark report automatically ranks the solvers. The Solver with rank

0 is called the favorite Solver: it performs best overall, but it might not

be the best on every problem. It's recommended to use that favorite Solver in

production.

However, there are different ways of ranking the solvers. Configure it like this:

<plannerBenchmark>

...

<benchmarkReport>

<solverRankingType>TOTAL_SCORE</solverRankingType>

</benchmarkReport>

...

</plannerBenchmark>The following solverRankingTypes are supported:

TOTAL_SCORE(default): Maximize the overall score, so minimize the overall cost if all solutions would be executed.WORST_SCORE: Minimize the worst case scenario.TOTAL_RANKING: Maximize the overall ranking. Use this if your datasets differ greatly in size or difficulty, producing a difference inScoremagnitude.

Solvers with at least one failed single benchmark do not get a ranking.

Solvers with not fully initialized solutions are ranked worse.

You can also use a custom ranking, by implementing a Comparator:

<benchmarkReport>

<solverRankingComparatorClass>...TotalScoreSolverRankingComparator</solverRankingComparatorClass>

</benchmarkReport>Or by implementing a weight factory:

<benchmarkReport>

<solverRankingWeightFactoryClass>...TotalRankSolverRankingWeightFactory</solverRankingWeightFactoryClass>

</benchmarkReport>Shows the best score per inputSolutionFile for each solver configuration.

Useful for visualizing the best solver configuration.

Shows the best score per problem scale for each solver configuration.

Useful for visualizing the scalability of each solver configuration.

Note

The problem scale will report 0 if any @ValueRangeProvider method

signature returns ValueRange (instead of CountableValueRange or

Collection).

Shows the best score distribution per inputSolutionFile for each solver

configuration.

Useful for visualizing the reliability of each solver configuration.

Enable statistical benchmarking to use this summary.

Shows the winning score difference per inputSolutionFile for each solver configuration.

The winning score difference is the score difference with the score of the winning solver configuration for that

particular inputSolutionFile.

Useful for zooming in on the results of the best score summary.

Shows the return on investment (ROI) per inputSolutionFile for each solver configuration

if you'd upgrade from the worst solver configuration for that particular

inputSolutionFile.

Useful for visualizing the return on investment (ROI) to decision makers.

Shows the score calculation speed: the average calculation count per second per problem scale for each solver configuration.

Useful for comparing different score calculators and/or score rule implementations (presuming that the solver configurations do not differ otherwise). Also useful to measure the scalability cost of an extra constraint.

Shows the time spent per inputSolutionFile for each solver configuration. This is

pointless if it's benchmarking against a fixed time limit.

Useful for visualizing the performance of construction heuristics (presuming that no other solver phases are configured).

Shows the time spent per problem scale for each solver configuration. This is pointless if it's benchmarking against a fixed time limit.

Useful for extrapolating the scalability of construction heuristics (presuming that no other solver phases are configured).

Shows the best score per time spent for each solver configuration. This is pointless if it's benchmarking against a fixed time limit.

Useful for visualizing trade-off between the best score versus the time spent for construction heuristics (presuming that no other solver phases are configured).

The benchmarker supports outputting problem statistics as graphs and CSV (comma separated values) files to

the benchmarkDirectory. To configure one, add a problemStatisticType

line:

<plannerBenchmark>

<benchmarkDirectory>local/data/nqueens/solved</benchmarkDirectory>

<inheritedSolverBenchmark>

<problemBenchmarks>

...

<problemStatisticType>BEST_SCORE</problemStatisticType>

<problemStatisticType>CALCULATE_COUNT_PER_SECOND</problemStatisticType>

</problemBenchmarks>

...

</inheritedSolverBenchmark>

...

</plannerBenchmark>Multiple problemStatisticType elements are allowed.

Note

These statistic per dataset can slow down the solver noticeably, which affects the benchmark results. That's why they are optional and not enabled by default.

The non-optional summary statistics cannot slow down the solver noticeably.

The following types are supported:

To see how the best score evolves over time, add:

<problemBenchmarks>

...

<problemStatisticType>BEST_SCORE</problemStatisticType>

</problemBenchmarks>Note

A time gradient based algorithm (such as Simulated Annealing) will have a different statistic if it's run with a different time limit configuration. That's because this Simulated Annealing implementation automatically determines its velocity based on the amount of time that can be spent. On the other hand, for the Tabu Search and Late Annealing, what you see is what you'd get.

The best score over time statistic is very useful to detect abnormalities, such as a potential score trap which gets the solver temporarily stuck in a local optima.

To see how the step score evolves over time, add:

<problemBenchmarks>

...

<problemStatisticType>STEP_SCORE</problemStatisticType>

</problemBenchmarks>Compare the step score statistic with the best score statistic (especially on parts for which the best score flatlines). If it hits a local optima, the solver should take deteriorating steps to escape it. But it shouldn't deteriorate too much either.

Warning

The step score statistic has been seen to slow down the solver noticeably due to GC stress, especially for fast stepping algorithms (such as Simulated Annealing and Late Acceptance).

To see how fast the scores are calculated, add:

<problemBenchmarks>

...

<problemStatisticType>CALCULATE_COUNT_PER_SECOND</problemStatisticType>

</problemBenchmarks>Note

The initial high calculate count is typical during solution initialization: it's far easier to calculate the score of a solution if only a handful planning entities have been initialized, than when all the planning entities are initialized.

After those few seconds of initialization, the calculate count is relatively stable, apart from an occasional stop-the-world garbage collector disruption.

To see how much each new best solution differs from the previous best solution, by counting the number of planning variables which have a different value (not including the variables that have changed multiple times but still end up with the same value), add:

<problemBenchmarks>

...

<problemStatisticType>BEST_SOLUTION_MUTATION</problemStatisticType>

</problemBenchmarks>Use Tabu Search - an algorithm that behaves like a human - to get an estimation on how difficult it would be for a human to improve the previous best solution to that new best solution.

To see how the selected and accepted move count per step evolves over time, add:

<problemBenchmarks>

...

<problemStatisticType>MOVE_COUNT_PER_STEP</problemStatisticType>

</problemBenchmarks>Warning

This statistic has been seen to slow down the solver noticeably due to GC stress, especially for fast stepping algorithms (such as Simulated Annealing and Late Acceptance).

A single statistic is a statics for 1 dataset for 1 solver configuration. Unlike a problem statistic, it does not aggregate over solver configurations.

The benchmarker supports outputting single statistics as graphs and CSV (comma separated values) files to

the benchmarkDirectory. To configure one, add a singleStatisticType

line:

<plannerBenchmark>

<benchmarkDirectory>local/data/nqueens/solved</benchmarkDirectory>

<inheritedSolverBenchmark>

<problemBenchmarks>

...

<problemStatisticType>...</problemStatisticType>

<singleStatisticType>PICKED_MOVE_TYPE_BEST_SCORE_DIFF</singleStatisticType>

</problemBenchmarks>

...

</inheritedSolverBenchmark>

...

</plannerBenchmark>Multiple singleStatisticType elements are allowed.

Note

These statistic per single benchmark can slow down the solver noticeably, which affects the benchmark results. That's why they are optional and not enabled by default.

The following types are supported:

To see which constraints are matched in the best score (and how much) over time, add:

<problemBenchmarks>

...

<singleStatisticType>CONSTRAINT_MATCH_TOTAL_BEST_SCORE</singleStatisticType>

</problemBenchmarks>Requires the score calculation to support constraint matches. Drools score calculation supports constraint matches automatically, but incremental Java score calculation requires more work.

Warning

The constraint match total statistics has been seen to affect the solver noticeably.

To see which constraints are matched in the step score (and how much) over time, add:

<problemBenchmarks>

...

<singleStatisticType>CONSTRAINT_MATCH_TOTAL_STEP_SCORE</singleStatisticType>

</problemBenchmarks>Requires the score calculation to support constraint matches. Drools score calculation supports constraint matches automatically, but incremental Java score calculation requires more work.

Warning

The constraint match total statistics has been seen to affect the solver noticeably.

To see which move types improve the best score (and how much) over time, add:

<problemBenchmarks>

...

<singleStatisticType>PICKED_MOVE_TYPE_BEST_SCORE_DIFF</singleStatisticType>

</problemBenchmarks>To see how much each winning step affects the step score over time, add:

<problemBenchmarks>

...

<singleStatisticType>PICKED_MOVE_TYPE_STEP_SCORE_DIFF</singleStatisticType>

</problemBenchmarks>If you have multiple processors available on your computer, you can run multiple benchmarks in parallel on multiple threads to get your benchmarks results faster:

<plannerBenchmark>

...

<parallelBenchmarkCount>AUTO</parallelBenchmarkCount>

...

</plannerBenchmark>Warning

Running too many benchmarks in parallel will affect the results of benchmarks negatively. Leave some processors unused for garbage collection and other processes.

We tweak parallelBenchmarkCount AUTO to maximize the reliability

and efficiency of the benchmark results.

The following parallelBenchmarkCounts are supported:

1(default): Run all benchmarks sequentially.AUTO: Let Planner decide how many benchmarks to run in parallel. This formula is based on experience. It's recommended to prefer this over the other parallel enabling options.Static number: The number of benchmarks to run in parallel.

<parallelBenchmarkCount>2</parallelBenchmarkCount>JavaScript formula: Formula for the number of benchmarks to run in parallel. It can use the variable

availableProcessorCount. For example:<parallelBenchmarkCount>(availableProcessorCount / 2) + 1</parallelBenchmarkCount>

Note

The parallelBenchmarkCount is always limited to the number of available processors.

If it's higher, it will be automatically decreased.

Note

If you have a computer with slow or unreliable cooling, increasing the

parallelBenchmarkCount above 1 (even on AUTO) may overheat your

CPU.

The sensors command can help you detect if this is the case. It is available in the

package lm_sensors or lm-sensors in most Linux distributions. There are

several freeware tools available for Windows too.

Note

In the future, we will also support multi-JVM benchmarking. This feature is independent of multi-threaded solving or multi-JVM solving.

To minimize the influence of your environment and the Random Number Generator on the benchmark results, configure the number of times each single benchmark run is repeated. The results of those runs are statistically aggregated. Each individual result is also visible in the report, as well as plotted in the best score distribution summary.

Just add a <subSingleCount> element to an <inheritedSolverBenchmark> element or in a

<solverBenchmark> element:

<?xml version="1.0" encoding="UTF-8"?>

<plannerBenchmark>

...

<inheritedSolverBenchmark>

...

<solver>

...

</solver>

<subSingleCount>10<subSingleCount>

</inheritedSolverBenchmark>

...

</plannerBenchmark>The subSingleCount defaults to 1 (so no statistical

benchmarking).

Note

If subSingleCount is higher than 1, the benchmarker will

automatically use a different Random seed for every sub single run, without losing

reproducibility (for each sub single index) in EnvironmentMode

REPRODUCIBLE and lower.

Matrix benchmarking is benchmarking a combination of value sets. For example: benchmark 4

entityTabuSize values (5, 7, 11 and

13) combined with 3 acceptedCountLimit values (500,

1000 and 2000), resulting in 12 solver configurations.

To reduce the verbosity of such a benchmark configuration, you can use a Freemarker template for the benchmark configuration instead:

<plannerBenchmark>

...

<inheritedSolverBenchmark>

...

</inheritedSolverBenchmark>

<#list [5, 7, 11, 13] as entityTabuSize>

<#list [500, 1000, 2000] as acceptedCountLimit>

<solverBenchmark>

<name>entityTabuSize ${entityTabuSize} acceptedCountLimit ${acceptedCountLimit}</name>

<solver>

<localSearch>

<unionMoveSelector>

<changeMoveSelector/>

<swapMoveSelector/>

</unionMoveSelector>

<acceptor>

<entityTabuSize>${entityTabuSize}</entityTabuSize>

</acceptor>

<forager>

<acceptedCountLimit>${acceptedCountLimit}</acceptedCountLimit>

</forager>

</localSearch>

</solver>

</solverBenchmark>

</#list>

</#list>

</plannerBenchmark>And build it with the class PlannerBenchmarkFactory:

PlannerBenchmarkFactory plannerBenchmarkFactory = PlannerBenchmarkFactory.createFromFreemarkerXmlResource(

"org/optaplanner/examples/cloudbalancing/benchmark/cloudBalancingBenchmarkConfigTemplate.xml.ftl");

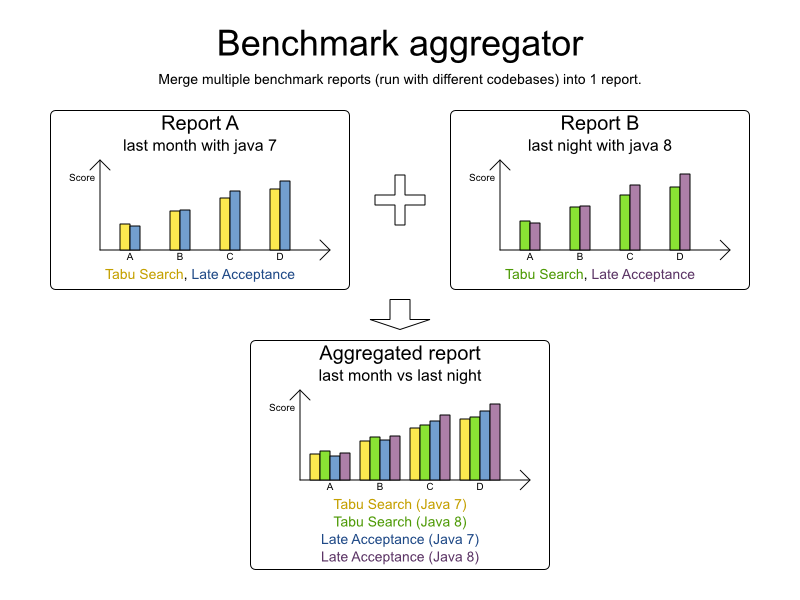

PlannerBenchmark plannerBenchmark = plannerBenchmarkFactory.buildPlannerBenchmark();The BenchmarkAggregator takes 1 or more existing benchmarks and merges them into new

benchmark report, without actually running the benchmarks again.

This is useful to:

Report on the impact of code changes: Run the same benchmark configuration before and after the code changes, then aggregate a report.

Report on the impact of dependency upgrades: Run the same benchmark configuration before and after upgrading the dependency, then aggregate a report.

Condense a too verbose report: Select only the interesting solver benchmarks from the existing report. This especially useful on template reports to make the graphs readable.

Partially rerun a benchmark: Rerun part of an existing report (for example only the failed or invalid solvers), then recreate the original intended report with the new values.

To use it, provide a PlannerBenchmarkFactory to the

BenchmarkAggregatorFrame to display the GUI:

public static void main(String[] args) {

PlannerBenchmarkFactory plannerBenchmarkFactory = PlannerBenchmarkFactory.createFromXmlResource(

"org/optaplanner/examples/nqueens/benchmark/nqueensBenchmarkConfig.xml");

BenchmarkAggregatorFrame.createAndDisplay(plannerBenchmarkFactory);

}Warning

Despite that it uses a benchmark configuration as input, it ignores all elements of that configuration,

except for the elements <benchmarkDirectory> and

<benchmarkReport>.

In the GUI, select the interesting benchmarks and click the button to generate the report.

Note

All the input reports which are being merged should have been generated with the same Planner version

(excluding hotfix differences) as the BenchmarkAggregator. Using reports from different

Planner major or minor versions are not guaranteed to succeed and deliver correct information, because the

benchmark report data structure often changes.